Results

Task Completion Test

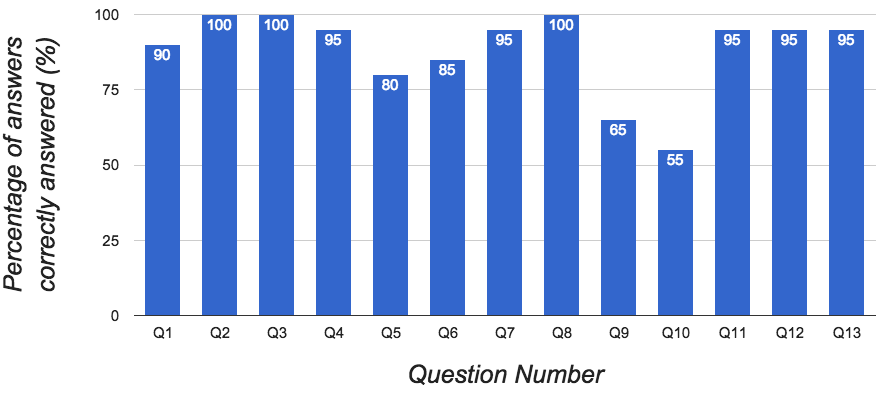

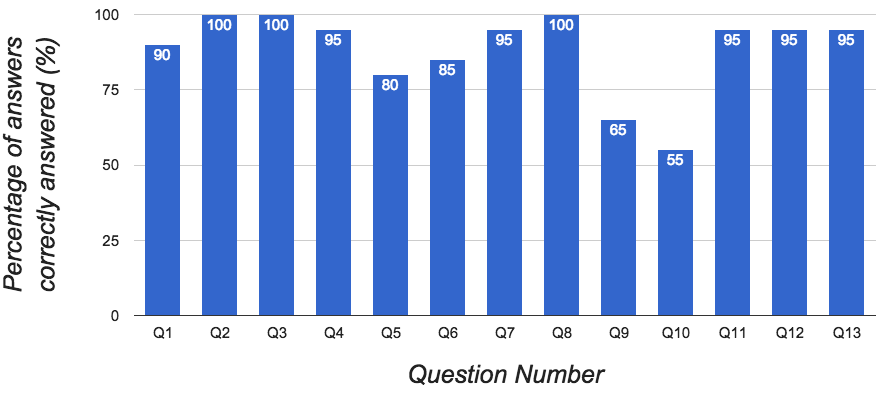

The results show that 11 of the 13 questions (84.6%) in the task completion test were answered correctly with high success rates (> 80%). These results reflect positively on the usefulness of the dashboard in supporting the tasks given to the users.

The task completion test highlights problems with questions 9 and 10. Figure 6 shows successful completion rates of 65% and 55% for these questions, respectively. On inspecting the answers given to question 9, we found that 4 of the 7 incorrect answers (57.1%) were the same. The question was “Which AS is the second largest source of traffic” and, although it was worded correctly, no emphasis was placed on “source”. A user who misread the question could be led to the wrong visualization in the dashboard. Judging from the results, this was the largest cause of error: the network graph (which shows combined sent and received totals) was used to answer the question where the row chart (which shows only the source total) would have given the correct answer.

Inspection of the answers given to question 10 revealed that 7 of the 9 incorrect answers (77.8%) were the same. Question 10 was “Which AS is the largest source of HTTPs traffic?” and the most common incorrect answer was the AS that was the largest source of HTTP traffic. The largest cause of error for question 10 therefore appears to be a misreading of the question.
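The proportions quoted for questions 9 and 10 can be checked with a few lines of Python. This is a minimal sketch, not the analysis script used in the study: the helper name `share` is hypothetical, and the participant count of 20 is an inference from the reported figures (7 incorrect answers at a 65% completion rate, 9 at 55%), not a number stated in the text.

```python
def share(part: int, whole: int) -> float:
    """Return part/whole as a percentage, rounded to one decimal place."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return round(100 * part / whole, 1)


# Assumption: 20 participants, inferred from the reported rates (not stated in the text).
PARTICIPANTS = 20

# Number of incorrect answers reported for each question.
incorrect = {9: 7, 10: 9}
# How many of those incorrect answers were the same wrong answer.
same_wrong = {9: 4, 10: 7}

# Successful completion rate per question.
completion = {q: share(PARTICIPANTS - n, PARTICIPANTS) for q, n in incorrect.items()}
# Share of incorrect answers accounted for by the most common wrong answer.
common_error = {q: share(same_wrong[q], incorrect[q]) for q in incorrect}
# Share of the 13 questions answered with a success rate above 80%.
high_success = share(11, 13)
```

Under the 20-participant assumption, the computed completion rates and error shares agree with the values reported above, which suggests the reported counts and percentages are internally consistent.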

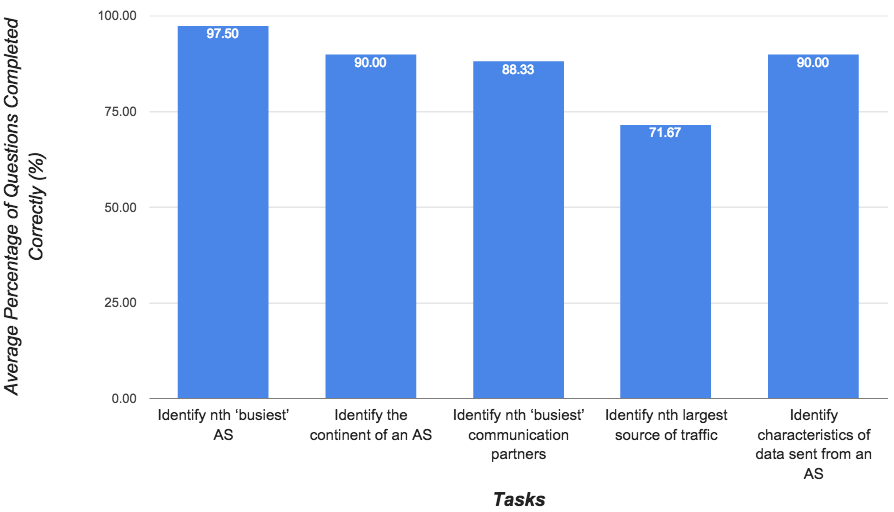

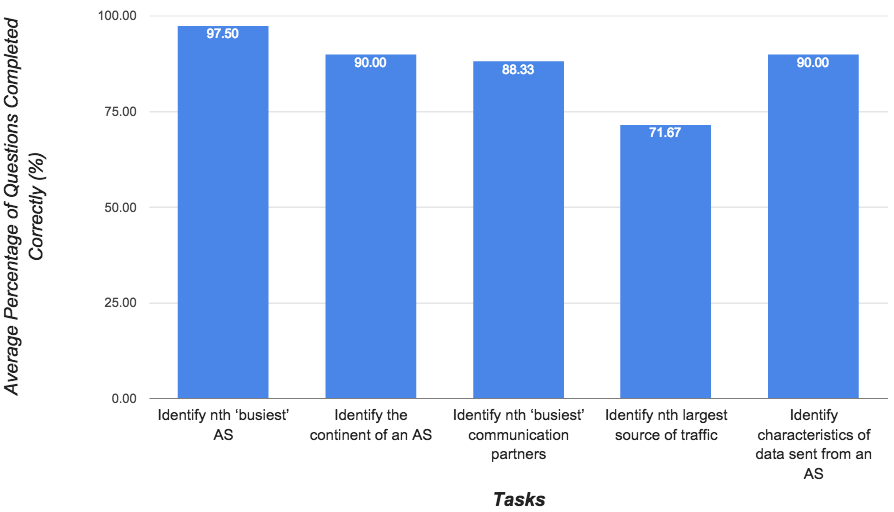

Questions 9 and 10 map to the “Identify the nth largest source of traffic” and “Identify the type and quantity of traffic sent from an AS” tasks, respectively. Analysis of the responses to the open-ended feedback questions highlights a theme of confusion over the wording of the task test questions, which further supports our explanation of the low success rates for questions 9 and 10.

Usability

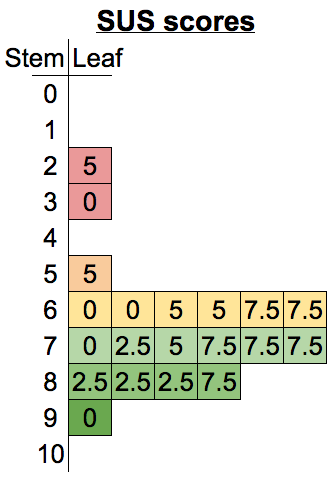

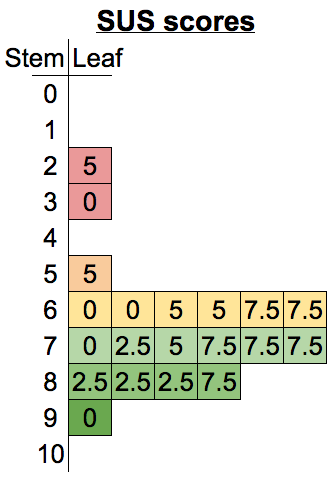

The average SUS score for our usability tests was 68.5. However, as Figure 8 highlights, our results include two concerning scores (25 and 30), which show that some users rated our system very poorly. SUS scores can be mapped to a scale of adjectives that gives a score more descriptive meaning. The average SUS score of our evaluation (68.5) fits the range described by “Good”. However, individual scores in our result set fit the “Poor” description, with one of them sitting on the border between “Poor” and “Worst Imaginable”. It is clear that the usability of the dashboard requires further design and implementation iterations based on the current feedback.
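For readers unfamiliar with how a single SUS score is derived, the sketch below applies the standard SUS scoring rule to one participant's ten responses. It assumes the standard ten-item questionnaire with 1 to 5 responses and alternating positively and negatively worded items; the text does not state that the study used this exact form. The function name `sus_score` and the example responses are hypothetical, not study data.

```python
def sus_score(responses: list[int]) -> float:
    """Convert ten 1-5 SUS responses into a 0-100 score (standard scoring rule)."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")

    total = 0
    for item, r in enumerate(responses, start=1):
        # Odd-numbered items are positively worded: contribution is r - 1.
        # Even-numbered items are negatively worded: contribution is 5 - r.
        total += (r - 1) if item % 2 == 1 else (5 - r)
    # The summed contributions (0-40) are scaled to the 0-100 range.
    return total * 2.5


# Hypothetical responses (not study data): mild disagreement with every
# positive item and mild agreement with every negative item.
low_example = sus_score([2, 4, 2, 4, 2, 4, 2, 4, 2, 4])
neutral_example = sus_score([3] * 10)
```

The hypothetical `low_example` shows the kind of response pattern that yields a score as low as the 25 reported above: the participant leans against the system on every item rather than on only a few.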

Figure 6: Task Completion Test Results

Figure 7: Task Completion Test Results Grouped by Task

Figure 8: Stem and Leaf Plot of SUS Scores